Not too long ago, a friend who has only done procedural programming asked me to explain object-oriented programming. Today it occurred to me that it might be fun to explain objects in terms of the X-Wing Miniatures Game. [Obligatory disclaimer: this game is owned by Fantasy Flight. I have no rights to it and use it solely because I thought it would be a fun example. Rules may be simplified.]

In the X-Wing Miniatures Game, players send fleets of ships against each other until one side is destroyed. Each ship is, naturally, a physical object that we can model with a logical object. How can we do that efficiently?

Base class: Ship

There are certain properties that are common across all ships, regardless of their physical aspects. Each ship has a name and a physical location in space. It has an attack value (how much damage it could potentially do), an agility value (how quickly it can maneuver), and a hull value (how many hits it can take before it is destroyed). It may or may not have shields. We can’t actually play a generic ship; the Ship class just holds these common attributes. In our code, we can create the Ship class as an abstract class, which can be inherited from (we can have other classes that “are” Ships) but not instantiated (we can’t just create a Ship).
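Here is a minimal sketch of what such an abstract base class might look like in Python. The attribute names come from the description above, but the default of zero shields and the flat (x, y) position are my own assumptions. Python only refuses to instantiate a class that has at least one abstract method, so the sketch declares a `move` method as abstract (Python spells it in lowercase by convention).

```python
from abc import ABC, abstractmethod


class Ship(ABC):
    """Attributes common to every ship. Abstract: it cannot be instantiated."""

    def __init__(self, name, attack, agility, hull, shields=0, position=(0, 0)):
        self.name = name
        self.attack = attack      # how much damage it could potentially do
        self.agility = agility    # how quickly it can maneuver
        self.hull = hull          # hits it can take before it is destroyed
        self.shields = shields    # assumption: 0 means "no shields"
        self.position = position  # assumption: a flat (x, y) location

    @abstractmethod
    def move(self, distance):
        """Concrete ship classes must provide their own movement."""


try:
    Ship("Generic ship", attack=1, agility=1, hull=1)
except TypeError as error:
    print(f"Cannot create a plain Ship: {error}")
```

Trying to build a plain `Ship` raises a `TypeError`, which is the "can't just create a Ship" rule enforced by the language.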

Subclass: Small, Medium, or Large Ship

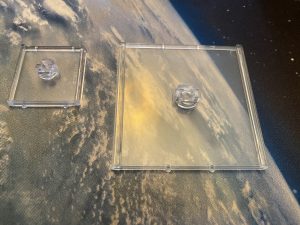

A given ship may be small, medium, or large. While a small ship and a medium ship each have all the attributes of the generic Ship class, they use different size bases (plastic bases, not to be confused with the base class) and follow slightly different rules.

These will also be abstract classes; we can’t actually fly a copy of SmallShip.
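As a rough sketch, the size classes could look like this in Python. The `base_size` attribute is a hypothetical stand-in for whatever size-specific rules a real implementation would need, and `Ship` is trimmed down to keep the example short.

```python
from abc import ABC, abstractmethod


class Ship(ABC):
    """Trimmed-down stand-in for the common base class."""

    def __init__(self, name):
        self.name = name

    @abstractmethod
    def move(self, distance):
        """Left abstract so that only concrete ships can be created."""


class SmallShip(Ship):
    base_size = "small"   # hypothetical attribute describing the plastic base


class MediumShip(Ship):
    base_size = "medium"


class LargeShip(Ship):
    base_size = "large"


# None of the size classes implements move(), so all of them stay abstract.
for size_class in (SmallShip, MediumShip, LargeShip):
    try:
        size_class("Nameless ship")
    except TypeError:
        print(f"{size_class.__name__} is abstract ({size_class.base_size} base)")
```

Because none of the three classes supplies `move`, Python treats them as abstract too, so there is still no way to fly a bare `SmallShip`.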

Object: The actual ship

When we instantiate an object, we create it in memory and provide values for its required attributes. For example, suppose we wish to instantiate a T-65 X-wing fighter. We create an XWing class which inherits from SmallShip (and thus indirectly inherits from Ship). The constructor sets the ship name (T-65 X-wing) and attributes (3 attack, 2 agility, 4 hull, 2 shields). We also need to assign a pilot to fly the ship, so we’ll create a Pilot object for Jek Porkins and associate it with the XWing object.

Jek needs some support, so we’ll instantiate a second XWing object and associate it with a Luke Skywalker Pilot object. The two X-wings were instantiated from the same class and have identical properties (although the pilots will use them in different ways) but they are different objects; what happens to one does not necessarily affect the other.
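The two X-wings might be built like this in Python. This is only a sketch: the `Pilot` dataclass, passing the pilot to the constructor, and the one-dimensional `position` are assumptions made for illustration, while the ship name and stats are the ones quoted above.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class Pilot:
    name: str


class Ship(ABC):
    def __init__(self, name, attack, agility, hull, shields, pilot):
        self.name = name
        self.attack = attack
        self.agility = agility
        self.hull = hull
        self.shields = shields
        self.pilot = pilot
        self.position = 0  # assumption: distance flown along a straight line

    @abstractmethod
    def move(self, distance):
        ...


class SmallShip(Ship):
    """Still abstract: it does not implement move()."""


class XWing(SmallShip):
    def __init__(self, pilot):
        # The constructor fixes the name and stats quoted in the post.
        super().__init__("T-65 X-wing", attack=3, agility=2, hull=4,
                         shields=2, pilot=pilot)

    def move(self, distance):
        self.position += distance


xwing1 = XWing(Pilot("Jek Porkins"))
xwing2 = XWing(Pilot("Luke Skywalker"))

print(xwing1.name == xwing2.name)   # True: same class, same starting values
print(xwing1 is xwing2)             # False: two separate objects
```

Both objects start with the same values because they come from the same class, but each one holds its own copy of them.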

Using the objects

Now that we’ve instantiated our fleet, we’re ready to send it out to battle some TIE fighters! The Ship class has Move as an abstract method; each of the concrete classes that inherits from Ship must implement this method. We’re in a hurry to blow up some TIEs, so we’ll call xwing1.Move(3) and xwing2.Move(4) to send our small fleet straight ahead into battle. If a pair of TIE fighters zero in on Jek and do four damage, his shields will be wiped out and his hull reduced to two. This doesn’t immediately affect Luke’s X-wing, but he’d better get over there and help…
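To make the battle concrete, here is a small Python sketch of the moves and the four points of damage described above. The `take_damage` method is a hypothetical helper of mine, and it assumes shields soak up damage before the hull does. The SmallShip layer and the pilots are left out to keep the example short.

```python
from abc import ABC, abstractmethod


class Ship(ABC):
    def __init__(self, name, hull, shields):
        self.name = name
        self.hull = hull
        self.shields = shields
        self.position = 0  # assumption: distance flown straight ahead

    @abstractmethod
    def move(self, distance):
        ...

    def take_damage(self, amount):
        """Hypothetical helper: shields absorb damage before the hull does."""
        absorbed = min(self.shields, amount)
        self.shields -= absorbed
        self.hull = max(0, self.hull - (amount - absorbed))


class XWing(Ship):
    def __init__(self):
        super().__init__("T-65 X-wing", hull=4, shields=2)

    def move(self, distance):
        self.position += distance


xwing1 = XWing()  # Jek
xwing2 = XWing()  # Luke
xwing1.move(3)
xwing2.move(4)

xwing1.take_damage(4)
print(xwing1.shields, xwing1.hull)  # 0 2
print(xwing2.shields, xwing2.hull)  # 2 4
```

Only `xwing1` changes; `xwing2` keeps its own shields and hull because each object tracks its own state.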

TL;DR

With object-oriented programming, we model the system as a collection of objects, each of which has properties (hull, agility) and methods (move, attack). Each object is responsible for keeping track of its own state, freeing the programmer from keeping track of it in an external data structure. A class that inherits from another class “is a” thing of that class. In our X-wing example, an X-wing and a Lambda-class shuttle may have different implementations of the Move() method, but because they are both Ships, we can call any method defined on the Ship class and get the implementation provided by the object’s actual class. Thus, we can write one function which takes a Ship as a parameter and calls ship.Move(), and we can pass that function any object instantiated from a class that derives from Ship; we don’t need a separate function for X-wings and TIE fighters (or even for SmallShips and MediumShips).
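That last point is easier to see in code. In this Python sketch, `move_slowly` is a hypothetical function that accepts any Ship, and the two `move` bodies are made-up placeholders rather than real game rules; the only goal is to show one function working with two different classes.

```python
from abc import ABC, abstractmethod


class Ship(ABC):
    def __init__(self, name):
        self.name = name

    @abstractmethod
    def move(self, distance):
        ...


class XWing(Ship):
    def move(self, distance):
        return f"{self.name} darts {distance} ahead"


class LambdaShuttle(Ship):
    def move(self, distance):
        return f"{self.name} lumbers {distance} ahead"


def move_slowly(ship: Ship) -> str:
    """Works for any Ship; the object's own class decides what move() does."""
    return ship.move(1)


for ship in (XWing("T-65 X-wing"), LambdaShuttle("Lambda-class shuttle")):
    print(move_slowly(ship))
```

The function never checks which kind of ship it was given. It just calls `move`, and each object answers with its own class's version.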

Now go blow up some TIEs.